Fortuna¶

A Library for Uncertainty Quantification¶

Proper estimation of predictive uncertainty is fundamental in applications that involve critical decisions. Uncertainty can be used to assess reliability of model predictions, trigger human intervention, or decide whether a model can be safely deployed in the wild.

Fortuna provides calibrated uncertainty estimates of model predictions in both classification and regression. It is designed to be easy to use and to make uncertainty estimation in production systems straightforward.

Quickstart¶

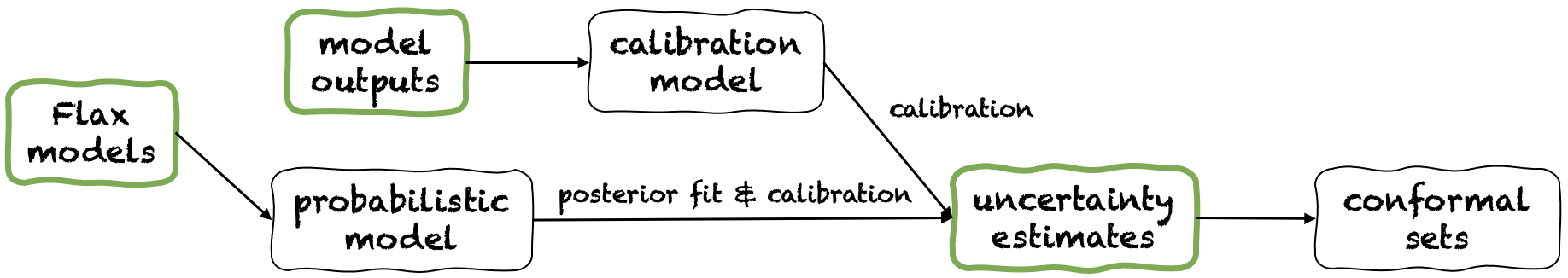

Fortuna offers three usage modes: From uncertainty estimates, From model outputs and From Flax models. Each serves users according to the constraints dictated by their own applications. Their pipelines are depicted in the figure above, each starting from one of the green panels.

The following sections give a brief overview of each usage mode. See Usage modes for more details.

From uncertainty estimates¶

Starting from uncertainty estimates has minimal compatibility requirements and is the quickest level of interaction with the library. This usage mode offers conformal prediction methods for both classification and regression. These take uncertainty estimates as input and return rigorous sets of predictions that retain a user-given level of probability. In one-dimensional regression tasks, conformal sets may be thought of as calibrated versions of confidence or credible intervals.

Mind that if the uncertainty estimates that you provide as input are inaccurate, conformal sets might be large and unusable. For this reason, if your application allows it, please consider the From model outputs and From Flax models usage modes.
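To make the idea of sets of predictions that retain a user-given level of probability more concrete, here is a minimal sketch of split conformal prediction for classification, written with the Python standard library only. It is an illustration of the general technique, not Fortuna's implementation; the function name conformal_sets and the toy data are assumptions made for this example.

```python
import math


def conformal_sets(val_probs, val_targets, test_probs, error):
    """Split conformal prediction sets from class probabilities (illustrative)."""
    n = len(val_targets)
    # Nonconformity score: one minus the probability assigned to the true class.
    scores = sorted(1.0 - p[y] for p, y in zip(val_probs, val_targets))
    # Finite-sample corrected rank of the (1 - error) quantile.
    rank = math.ceil((n + 1) * (1.0 - error))
    # If the rank exceeds n, the threshold is infinite and every class is kept.
    threshold = scores[rank - 1] if rank <= n else math.inf
    # Keep every class whose score does not exceed the threshold.
    return [
        [k for k, pk in enumerate(p) if 1.0 - pk <= threshold]
        for p in test_probs
    ]


# Toy validation probabilities and labels (made up for illustration).
val_probs = [[0.9, 0.1], [0.2, 0.8], [0.4, 0.6], [0.3, 0.7]]
val_targets = [0, 1, 0, 1]
# One confident and one ambiguous test prediction.
test_probs = [[0.95, 0.05], [0.5, 0.5]]
sets = conformal_sets(val_probs, val_targets, test_probs, error=0.25)
print(sets)
```

In this toy run the confident test prediction yields a single-class set, while the ambiguous one yields a set containing both classes. The less accurate the input probabilities, the larger the threshold and hence the sets become.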

Example. Suppose you want to calibrate credible intervals, each corresponding to a different test input variable, with a coverage error given by error. We assume that credible intervals are passed as arrays of lower and upper bounds, respectively test_lower_bounds and test_upper_bounds. You also have lower and upper bounds of credible intervals computed for several validation inputs, respectively val_lower_bounds and val_upper_bounds. The corresponding array of validation targets is denoted by val_targets. The following code produces conformal prediction intervals, i.e. calibrated versions of your test credible intervals.

conformal_interval()¶

from fortuna.conformal import QuantileConformalRegressor

conformal_intervals = QuantileConformalRegressor().conformal_interval(
    val_lower_bounds=val_lower_bounds,
    val_upper_bounds=val_upper_bounds,
    test_lower_bounds=test_lower_bounds,
    test_upper_bounds=test_upper_bounds,
    val_targets=val_targets,
    error=error,
)
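For intuition about what such a calibration step can look like, the following sketch implements the standard conformalized quantile regression recipe in plain Python. Whether Fortuna's QuantileConformalRegressor follows exactly these steps is an assumption; the function name conformalize_intervals and the toy numbers are hypothetical.

```python
import math


def conformalize_intervals(val_lower, val_upper, val_targets,
                           test_lower, test_upper, error):
    """Conformalized quantile regression on precomputed intervals (illustrative)."""
    n = len(val_targets)
    # Score: how far each validation target falls outside its interval
    # (negative when the target lies strictly inside).
    scores = sorted(
        max(lo - y, y - up)
        for lo, up, y in zip(val_lower, val_upper, val_targets)
    )
    # Finite-sample corrected rank of the (1 - error) quantile.
    rank = math.ceil((n + 1) * (1.0 - error))
    q = scores[rank - 1] if rank <= n else math.inf
    # Widen (q > 0) or shrink (q < 0) every test interval by the same margin.
    return [(lo - q, up + q) for lo, up in zip(test_lower, test_upper)]


# Toy data: validation intervals [0, 1] that miss several targets.
val_lower = [0.0, 0.0, 0.0, 0.0]
val_upper = [1.0, 1.0, 1.0, 1.0]
val_targets = [0.5, 1.5, -0.25, 2.0]
calibrated = conformalize_intervals(
    val_lower, val_upper, val_targets,
    test_lower=[2.0], test_upper=[3.0], error=0.25,
)
print(calibrated)
```

Because the validation intervals in this toy example miss their targets by up to one unit, the test interval is widened by that margin on each side. This is the mechanism behind the earlier warning: poorly estimated input intervals lead to wide conformal intervals.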

From model outputs¶

Starting from model outputs assumes you have already trained a model in some framework and arrive at Fortuna with model outputs in numpy.ndarray format for each input data point. This usage mode allows you to calibrate your model outputs, estimate uncertainty, compute metrics and obtain conformal sets.

Compared to the From uncertainty estimates usage mode, this one offers better control, as it can make sure uncertainty estimates have been appropriately calibrated. However, if the model was trained with classical methods, the resulting quantification of model (a.k.a. epistemic) uncertainty may be poor. To mitigate this problem, please consider the From Flax models usage mode.

Example. Suppose you have calibration and test model outputs, respectively calib_outputs and test_outputs. Furthermore, you have an array of calibration target variables, calib_targets. The following code provides a minimal classification example to get calibrated predictive entropy estimates.

OutputCalibClassifier, calibrate(), entropy()¶

from fortuna.output_calib_model import OutputCalibClassifier

calib_model = OutputCalibClassifier()
status = calib_model.calibrate(calib_outputs=calib_outputs, calib_targets=calib_targets)
test_entropies = calib_model.predictive.entropy(outputs=test_outputs)
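To illustrate what calibrating outputs and computing a predictive entropy can involve, the sketch below uses temperature scaling as the calibration method. This choice is an assumption made for the example and may differ from what OutputCalibClassifier does internally; the helper names, the grid search and the toy logits are likewise hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def fit_temperature(calib_logits, calib_targets, grid):
    """Pick the temperature in `grid` with the lowest calibration NLL."""
    def nll(t):
        return -sum(
            math.log(softmax(z, t)[y])
            for z, y in zip(calib_logits, calib_targets)
        )
    return min(grid, key=nll)


def entropy(probs):
    """Shannon entropy (in nats) of a categorical distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


# Toy calibration set: confident logits, yet one of four predictions is wrong.
calib_logits = [[4.0, 0.0], [4.0, 0.0], [0.0, 4.0], [4.0, 0.0]]
calib_targets = [0, 1, 1, 0]
temperature = fit_temperature(calib_logits, calib_targets,
                              grid=[0.5, 1.0, 2.0, 4.0, 8.0])

test_logits = [4.0, 0.0]
raw_entropy = entropy(softmax(test_logits))
calibrated_entropy = entropy(softmax(test_logits, temperature))
print(temperature, raw_entropy, calibrated_entropy)
```

Since the toy model is overconfident, the fitted temperature is larger than one and the calibrated entropy is higher than the raw one. A grid search keeps the sketch short; in practice the temperature is usually fitted by gradient-based optimization.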

From Flax models¶

Starting from Flax models has higher compatibility requirements than the From uncertainty estimates and From model outputs usage modes, as it requires deep learning models written in Flax. However, it enables you to replace standard model training with scalable Bayesian inference procedures, which may significantly improve the quantification of predictive uncertainty.

Example. Suppose you have a Flax classification deep learning model, model, mapping inputs to logits, with output dimension given by output_dim. Furthermore, you have training, validation and test TensorFlow data loaders, respectively train_data_loader, val_data_loader and test_data_loader. The validation data loader is used here for calibration. The following code provides a minimal classification example to get calibrated probability estimates.

from_tensorflow_data_loader(), ProbClassifier, train(), mean()¶

from fortuna.data import DataLoader
from fortuna.prob_model import ProbClassifier

# Convert the TensorFlow data loaders into Fortuna data loaders.
train_data_loader = DataLoader.from_tensorflow_data_loader(train_data_loader)
calib_data_loader = DataLoader.from_tensorflow_data_loader(val_data_loader)
test_data_loader = DataLoader.from_tensorflow_data_loader(test_data_loader)

# Fit the probabilistic model, then compute predictive means on the test inputs.
prob_model = ProbClassifier(model=model)
status = prob_model.train(train_data_loader=train_data_loader, calib_data_loader=calib_data_loader)
test_means = prob_model.predictive.mean(inputs_loader=test_data_loader.to_inputs_loader())
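As a rough picture of why Bayesian inference can improve predictive uncertainty, the sketch below averages class probabilities over several sets of logits, each standing in for a prediction made with a different posterior sample of the model parameters. This is the general Bayesian model averaging idea, not a description of how Fortuna computes predictive.mean; the function names and the toy logits are assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predictive_mean(sampled_logits):
    """Average class probabilities over posterior samples for one input."""
    probs = [softmax(z) for z in sampled_logits]
    n_classes = len(probs[0])
    return [sum(p[k] for p in probs) / len(probs) for k in range(n_classes)]


# Two hypothetical posterior samples that agree on the prediction...
agree = predictive_mean([[2.0, 0.0], [2.0, 0.0]])
# ...and two that disagree, as may happen far from the training data.
disagree = predictive_mean([[2.0, 0.0], [0.0, 2.0]])
print(agree, disagree)
```

When the samples agree, the averaged probabilities stay confident. When they disagree, the average moves toward uniform, which is how model (epistemic) uncertainty shows up in the predictive distribution.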

Installation¶

NOTE: Before installing Fortuna, you are required to install JAX in your virtual environment.

You can install Fortuna by typing

pip install aws-fortuna

Alternatively, you can build the package using Poetry. If you choose this route, first install Poetry and add it to your PATH (see here). Then type

poetry install

All the dependencies will be installed at their required versions. If you also want to install the optional Sphinx dependencies to build the documentation, add the flag -E docs to the command above.

Finally, you can either access the virtualenv that Poetry created by typing poetry shell, or execute commands within the virtualenv using the run command, e.g. poetry run python.
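After installing, a quick way to confirm that both JAX and Fortuna are visible to the active interpreter is a small check like the one below. It is a convenience sketch, not part of Fortuna; the function name check_installation is hypothetical, and the module names jax and fortuna are those used in import statements.

```python
from importlib.util import find_spec


def check_installation(modules=("jax", "fortuna")):
    """Report which of the given top-level modules can be imported."""
    return {name: find_spec(name) is not None for name in modules}


status = check_installation()
for name, available in status.items():
    print(f"{name}: {'found' if available else 'missing'}")
```

Run it inside the same environment you installed into, for example via poetry run python if you used Poetry.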

License¶

This project is licensed under the Apache-2.0 License.